Listen API Announcements

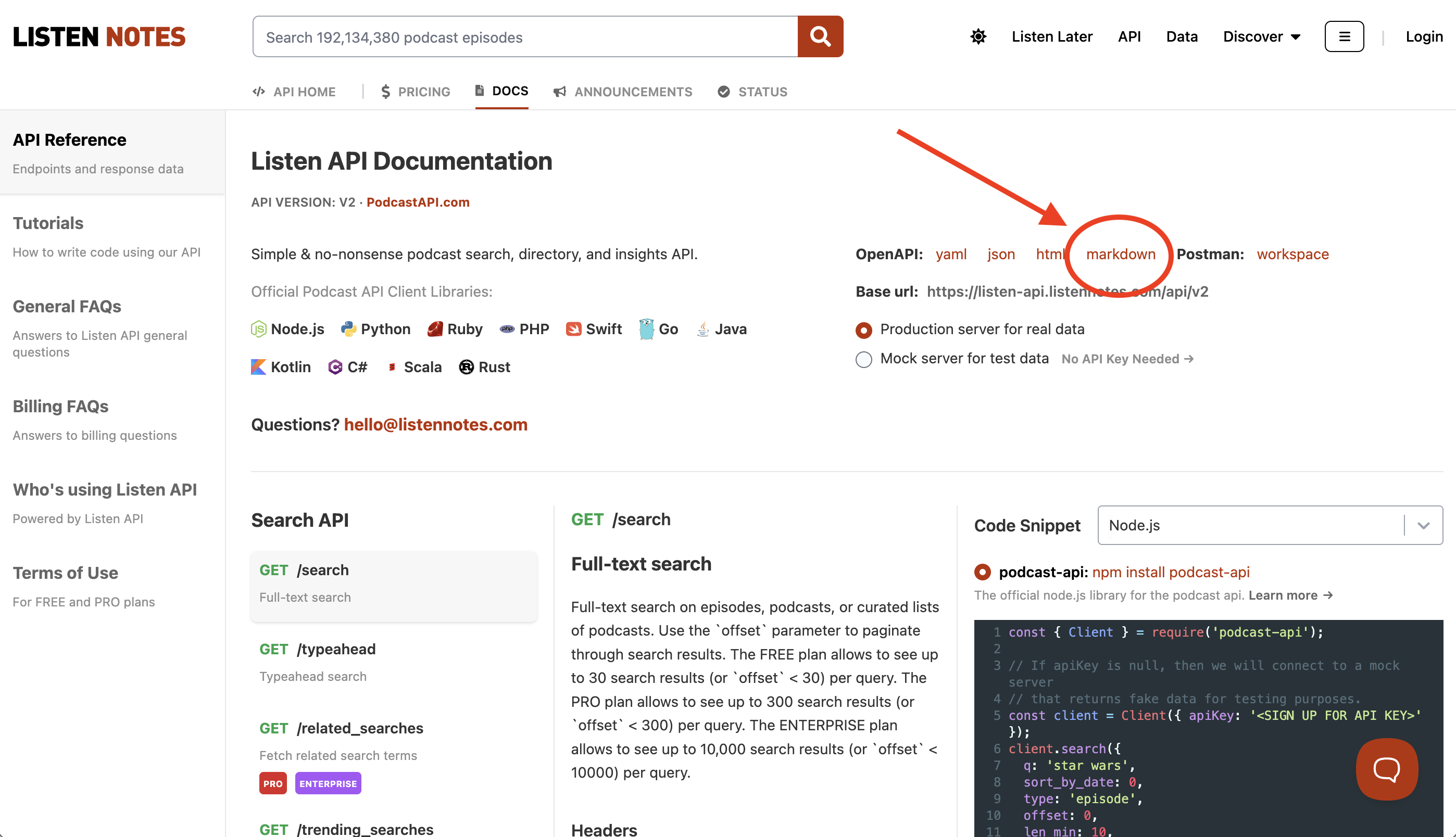

Copy to LLM: Podcast API Docs in Markdown 🔗

We just made it much easier to build with the Listen Notes Podcast API using AI. You can now access our entire API documentation as a single Markdown file at https://www.listennotes.com/api/docs/openapi.md

Whether you are using ChatGPT, Claude, or any other LLM, you can now simply copy-paste the entire documentation to give the AI full context. This allows your favorite AI assistant to help you write, debug, and optimize your code with perfect accuracy.

You can also find the Markdown file on the API Docs page: https://www.listennotes.com/api/docs/

Removal of the "google_url" Field from API Responses 🔗

Google Podcasts was discontinued on April 2, 2024. Consequently, we are updating PodcastAPI.com to align with this change. The "google_url" field (a sub-field of the "extra" field), previously used in our API responses to provide a link to Google Podcasts, will no longer be supported.

In detail, while API responses for existing users will retain the "google_url" field for backward compatibility, it will now return an empty string "". For any new API accounts created from this point forward, the "google_url" field will be completely absent from responses.
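Client code that reads this field may want to treat an empty string and a missing key the same way. A minimal sketch, assuming the podcast object has already been parsed into a Python dict (the helper name `google_podcasts_url` is hypothetical, not part of any SDK):

```python
from typing import Optional


def google_podcasts_url(podcast: dict) -> Optional[str]:
    """Return the Google Podcasts link, or None when it is empty or absent."""
    extra = podcast.get("extra") or {}
    # Existing accounts receive "", new accounts receive no key at all.
    return extra.get("google_url") or None
```

With this helper, both kinds of accounts get `None` for podcasts that no longer have a usable link.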

This adjustment ensures our API remains clean and efficient, avoiding links that lead to non-existent content.

Find Podcasts with Guest Interviews and Sponsors Easily 🔗

We're happy to share new updates that help you find podcasts with guest interviews and sponsors faster using our Podcast API.

New Update #1: two new data fields

We added two new data fields to the podcast metadata you get from our API:

- has_guest_interviews: This shows if there are guest interviews in the podcast (true or false).

- has_sponsors: This tells you if a podcast has sponsors (true or false).

Everyone using our API, whether you're on the FREE, PRO, or ENTERPRISE plan, can see these two fields in the response data of various endpoints, e.g., GET /podcasts/{id}, GET /best_podcasts, and GET /search?type=podcast.

New Update #2: two new search filters

We added two new parameters to the GET /search endpoint:

- interviews_only: Use this to only find podcasts with guest interviews. Put 1 for yes and 0 for no.

- sponsored_only: This helps you find only podcasts with sponsors. Use 1 for yes and 0 for no.

These search filters are for finding podcasts (make sure "type" is set to "podcast") and are available for PRO and ENTERPRISE plans.
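As a rough illustration, a request URL using these filters could be assembled like this. It is only a sketch: the base URL is taken from the GET /best_podcasts example elsewhere on this page, and `q` as the name of the search-term parameter is an assumption, so please check the API Docs before relying on it.

```python
from urllib.parse import urlencode

# Base URL as it appears in the best_podcasts example on this page.
BASE_URL = "https://listen-api.listennotes.com/api/v2"


def podcast_search_url(query: str, interviews_only: bool = False,
                       sponsored_only: bool = False) -> str:
    """Build a GET /search URL restricted to podcasts."""
    params = {
        "q": query,  # assumed name of the search-term parameter
        "type": "podcast",  # the filters only apply to podcast search
        "interviews_only": int(interviews_only),  # 1 for yes, 0 for no
        "sponsored_only": int(sponsored_only),  # 1 for yes, 0 for no
    }
    return f"{BASE_URL}/search?{urlencode(params)}"
```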

We use AI to figure out if a podcast has guest interviews or sponsors. Sometimes we might get it wrong. If you see a mistake, you can help us fix it by updating the information on the podcast's page on listennotes.com (under the EDIT tab) - Example.

If you have any questions, feel free to ask.

Introducing new API endpoint GET /search_episode_titles 🔗

We are pleased to announce the addition of a new API endpoint: GET /search_episode_titles. This endpoint enables (relatively) precise searching of podcast episodes based solely on their titles. It's available across all API plans (FREE, PRO, ENTERPRISE). It has two optional parameters:

- podcast_id: Filter search within a specific podcast.

- podcast_id_type: Choose from listennotes_id, itunes_id, spotify_id, or rss.

Use Case Example:

This new endpoint facilitates features like "import an episode from other podcast apps to your app." Users can, for instance, import episodes from Spotify/Apple Podcasts and then fetch episode metadata (e.g., audio url) via Listen API.

How Does This Differ from GET /search?

- Simplicity: The new endpoint focuses solely on episode titles, eliminating the need for multiple parameter tuning.

- Performance: The dedicated function allows for optimized search performance, because it searches only episode titles, rather than searching multiple fields with GET /search.

- Future Improvement: Provides room for specific enhancements without complicating the existing GET /search endpoint.

Important Caveat:

While useful, this endpoint isn't a universal solution for matching known episodes across different apps due to the lack of unique episode IDs. Developers should implement a fallback (e.g., show a list of recent episodes of a podcast) or final confirmation mechanism for end-users.
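One way to decide when to trust a match and when to fall back is to accept a result only if exactly one title matches. This is a sketch of that idea, not our matching logic: it assumes each search result is a dict with a `title` key, which is a hypothetical field name used for illustration.

```python
from typing import List, Optional


def pick_confident_match(wanted_title: str, candidates: List[dict]) -> Optional[dict]:
    """Return a candidate only when exactly one title matches, else None."""
    wanted = wanted_title.strip().casefold()
    exact = [c for c in candidates
             if c.get("title", "").strip().casefold() == wanted]
    # Zero or several exact matches are ambiguous: let the end-user confirm.
    return exact[0] if len(exact) == 1 else None
```

When the function returns `None`, the app can show a list of recent episodes or ask the user to confirm.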

SDK Update:

If you're using one of our language-specific SDKs (e.g., python, nodejs, php...), please update to the latest version to utilize this new feature.

We look forward to seeing the innovative ways you'll make use of this new capability. Thank you for your continued support and partnership.

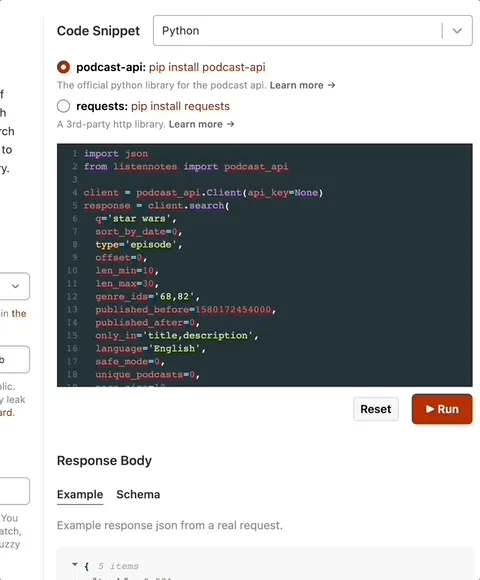

Run code snippets on the Docs page 🔗

Previously, you could run only Node.js code directly on our API Docs page.

Now we support other languages, including Python, Java, Kotlin, Scala, Ruby, Go, Rust, PHP, and Shell. You can tweak and run the code without leaving the Docs page, and see the JSON results right there.

We do hope that all API documentation pages from other companies will follow suit, so developers can easily try out code snippets in the web browser. The developer UX is really important.

New API endpoint: GET /podcasts/domains/{domain_name} 🔗

We just added a new API endpoint to fetch podcasts by a publisher's domain name: GET /podcasts/domains/{domain_name}

For example, if you want to get a list of podcasts produced from nytimes.com, then you can do GET /podcasts/domains/nytimes.com.

Domain names are very useful join keys. If you already have a list of domain names (e.g., in a CRM), you can enrich the data with podcasts.
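For example, turning a CRM's domain column into request URLs could look like the sketch below. It assumes this endpoint lives under the same base URL as the other v2 endpoints on this page; the helper name is hypothetical.

```python
from urllib.parse import quote

# Assumed to be the same base URL used by the other v2 endpoints.
BASE_URL = "https://listen-api.listennotes.com/api/v2"


def podcasts_by_domain_url(domain_name: str) -> str:
    """Build the GET /podcasts/domains/{domain_name} URL for one CRM value."""
    # Normalise so that " NYTimes.com " and "nytimes.com" map to the same URL.
    domain = domain_name.strip().lower()
    return f"{BASE_URL}/podcasts/domains/{quote(domain, safe='')}"
```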

This new endpoint is available to all API plans, including FREE, PRO, and ENTERPRISE.

BTW - We've got a Chrome extension that shows podcasts from a specific domain name, which is a good demo of this new API endpoint.

Fair Billing for the PRO plan 🔗

The PRO plan's pricing structure consists of two parts: a base fee of US$180 and an overage fee (if any).

Previously (Dec. 2017 ~ Jan. 18, 2023), the base fee of US$180 was mandatory even when 0 requests were used in a billing cycle (30 days).

From now on (Jan. 18, 2023 ~ now), PRO plan subscribers will pay $0 if no requests are used in a billing cycle (30 days). In other words, the base fee of US$180 is charged only when at least 1 request is used in a billing cycle (30 days).
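The rule can be written as a small function. This is only an illustration of the arithmetic: the overage rate is not stated here, so the overage amount is passed in by the caller instead of being computed.

```python
def pro_plan_charge(requests_used: int, overage_fee: float = 0.0,
                    base_fee: float = 180.0) -> float:
    """Amount due in USD for one 30-day billing cycle under the new rule."""
    if requests_used < 0:
        raise ValueError("requests_used must be non-negative")
    if requests_used == 0:
        return 0.0  # no requests in the cycle, so no base fee
    # At least one request: base fee plus whatever overage applies.
    return base_fee + overage_fee
```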

New parameter "unique_podcasts" for GET /search 🔗

We just added a new parameter "unique_podcasts=1" for GET /search (when type=episode), which asks Listen API to return only one episode per podcast in search results. This new parameter is available for all users (including the FREE plan).

Typically, episode search results include multiple episodes from the same podcast. In some cases, you may want to collapse them and keep only one episode per podcast. For example, you may want to know which podcasts mention a specific promo code in any episode, but you don't care which episodes.

You can test the "unique_podcasts" parameter on our website listennotes.com to see the difference in search results:

- Without unique_podcasts: https://lnns.co/wZ_RhWbP9Tp

- With unique_podcasts: https://lnns.co/HcvJDuhNRLo

New data fields: total_audio_length_sec and latest_episode_id 🔗

We just added two new data fields to the API response:

- total_audio_length_sec: Total audio length of all episodes in a playlist (in seconds). It's in the response of GET /playlists/{id} and GET /playlists. Available to all plans: FREE, PRO, and ENTERPRISE.

- latest_episode_id: The most recent episode's id of a podcast. It's in the response of any endpoints that have podcast objects. Available to PRO and ENTERPRISE plans.

btw - If you haven't used our Playlist API yet, you may want to try it out. It's like headless CMS - your human curators use our website to curate playlists, and your app uses our api to fetch structured data of playlists.

"rejected" status for podcast submission: POST /podcasts/submit 🔗

The endpoint POST /podcasts/submit is typically used by a podcast hosting service to help podcasters one-button submit shows to Listen Notes.

We just added a "rejected" status in the response of POST /podcasts/submit, and a new webhook event "podcast.submit.rejected".

If a podcast submission is rejected, the webhook event "podcast.submit.rejected" will be triggered immediately (you can set up webhooks in the dashboard). Any subsequent requests to POST /podcasts/submit with the same RSS feed will get a JSON response with "rejected" status.

If a submission was not accepted within 12 hours, you can also assume that it's rejected by us.
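On the client side, the two rules above could be combined roughly as follows. This is a sketch: only the "rejected" status value comes from this announcement, and how your code learns that a submission was accepted (the `accepted` flag) is left to you.

```python
from datetime import datetime, timedelta

REVIEW_WINDOW = timedelta(hours=12)


def is_effectively_rejected(status: str, submitted_at: datetime,
                            now: datetime, accepted: bool = False) -> bool:
    """Apply the explicit "rejected" status and the 12-hour rule."""
    if status == "rejected":
        return True
    if accepted:
        return False
    # Not accepted within the review window: assume it was rejected.
    return now - submitted_at > REVIEW_WINDOW
```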

Typically, it's very obvious why a podcast is rejected if you read the podcast description (e.g., for blackhat seo link building, or violating our content policy…) or listen to the audio (e.g., robot voice…).

It's better NOT to re-submit a rejected rss from your end. Instead, you can ask your podcaster users to contact us directly if their shows are rejected: hello@listennotes.com

Look up podcasts by Spotify ids 🔗

We added a new parameter spotify_ids to POST /podcasts, for PRO and ENTERPRISE plans. You can fetch up to 10 podcasts by their Spotify ids. This can help your users import their podcasts into your app via Spotify ids, in addition to Apple Podcasts (iTunes) ids and RSS urls.
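If a user imports more than 10 shows, the ids have to be split across several requests. A minimal sketch of that batching step; joining the ids with commas is an assumption about the request format, so please confirm it on the API Docs page.

```python
from typing import Iterator, List

MAX_IDS_PER_REQUEST = 10  # limit stated in this announcement


def spotify_id_batches(ids: List[str],
                       size: int = MAX_IDS_PER_REQUEST) -> Iterator[str]:
    """Yield one spotify_ids value per request, at most `size` ids each."""
    for start in range(0, len(ids), size):
        # Assumption: ids are sent as a single comma-separated string.
        yield ",".join(ids[start:start + size])
```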

As of today, ~50% of podcasts in our database have Spotify ids. We'll continue to add Spotify urls to our database.

You can see some code examples of using spotify_ids on our API Docs page: https://www.listennotes.com/api/docs/#post-api-v2-podcasts

Added 3 new data fields and 2 new search filters 🔗

We just added 3 new data fields to a podcast object for all API plans (FREE, PRO, and ENTERPRISE):

- audio_length_sec: The average audio length of all episodes of a podcast, in seconds.

- update_frequency_hours: How frequently does this podcast release a new episode? In hours. For example, if the value is 166, then it's every 166 hours (roughly weekly, since a week is 168 hours).

- amazon_music_url: A podcast's Amazon Music url.

We also added 2 new search filters to GET /search for all API plans (FREE, PRO, and ENTERPRISE):

- len_min & len_max: Filter podcasts by average audio length, in minutes. For example, if you want to search a keyword and show podcasts whose average audio length is between 10 minutes and 30 minutes, then set len_min=10 and len_max=30.

- update_freq_min & update_freq_max: Filter podcasts by update frequency. For example, if you want to search a keyword and show podcasts whose update frequency is ~weekly, then set update_freq_min=144 (or 6 days) and update_freq_max=192 (or 8 days).
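The unit conversions in the two examples above can be sketched as a small helper. The function name is hypothetical; it only prepares the four filter values and does not call the API.

```python
from typing import Dict, Tuple


def podcast_filter_params(len_minutes: Tuple[int, int],
                          update_days: Tuple[int, int]) -> Dict[str, int]:
    """Prepare the length (minutes) and update-frequency (hours) filters."""
    len_min, len_max = len_minutes
    days_min, days_max = update_days
    return {
        "len_min": len_min,  # already in minutes
        "len_max": len_max,
        "update_freq_min": days_min * 24,  # days converted to hours
        "update_freq_max": days_max * 24,
    }
```

For instance, `podcast_filter_params((10, 30), (6, 8))` gives the 144 and 192 hour bounds from the ~weekly example.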

Learn more at: https://www.listennotes.com/api/docs/

Related searches, spellcheck, and trending searches 🔗

We just added three new search endpoints:

- GET /related_searches: Suggest related search terms. This is similar to the "Related searches" section on Google and other search engines - For PRO and ENTERPRISE plans.

- GET /spellcheck: Suggest a list of words that correct the spelling errors of a search term. This is similar to "Did you mean..." on Google and other search engines - For PRO and ENTERPRISE plans.

- GET /trending_searches: Fetch up to 10 most recent trending search terms on the Listen Notes platform - For FREE, PRO and ENTERPRISE plans.

We've got a help page "Use Podcast Search APIs" to visually show the use case of various search endpoints.

Sort "best podcasts" in different ways 🔗

We just added a "sort" parameter to the GET /best_podcasts endpoint, so you can sort "best podcasts" in 5 different ways:

- recent_added_first (default): If Podcast A is added to the "Best Podcasts" list later than B, then A appears in front of B.

- oldest_added_first: If A is added to the "Best Podcasts" list later than B, then B appears in front of A.

- recent_published_first: If A's latest episode is published later than B's latest episode, then A appears in front of B.

- oldest_published_first: If A's latest episode is published later than B's latest episode, then B appears in front of A.

- listen_score: If A's Listen Score is higher than B's, then A appears in front of B.

For example, if you want to fetch "Best Podcasts" and sort them by popularity, then you can use:

GET https://listen-api.listennotes.com/api/v2/best_podcasts?sort=listen_score

The GET /best_podcasts endpoint fetches the same data as on the "Best Podcasts" page. So you can see the same results on our website: https://www.listennotes.com/best-podcasts/?sort_type=listen_score

Added two new parameters for GET /best_podcasts 🔗

We added two new parameters for GET /best_podcasts (available to all API users):

- publisher_region: Get "best podcasts" that are produced in a specific region.

- language: Get "best podcasts" in a specific language.

What's the difference between publisher_region and region?

Let's first recap what "best podcasts" are in the Listen Notes ecosystem: "Best podcasts" are curated by Listen Notes staff based on various signals from the Internet, e.g., top charts on other podcast platforms, recommendations from mainstream media, user activities on listennotes.com...

"Best podcasts" in a region may not be produced in that region. For example, a USA podcast may be popular in Canada or Japan.

This new publisher_region parameter allows you to further narrow down results by the origin. Let's say you want to get "best podcasts" in Japan that are also produced in Japan (not from other countries), then you should pass region=jp and publisher_region=jp to GET /best_podcasts.

Show transcript and include/exclude multiple podcasts in search results 🔗

We just made two improvements to the API:

1) Transcript

The GET /episodes/{id} endpoint can return a "transcript" field, if the "show_transcript=1" parameter is provided.

We don't include transcripts by default, because 1) less than 1% of 90,000,000 episodes have transcripts in our database; 2) including a transcript would make the response data very big, and thus slow down the response time.

Please check out the example response data on our API Docs page, or see what the transcript looks like on our website (Example).

How to upload transcripts to Listen Notes? If podcasters claim their podcast page(s) on Listen Notes, they can upload transcripts under the EDIT tab (Example). Or if there's a <podcast:transcript> tag in the rss feed, we'll automatically fetch the transcript (Example).

For now, transcript is available only in the PRO/ENTERPRISE plan.

2) Include/exclude multiple podcasts in search results

To search episodes (GET /search), you can include (ocid parameter) or exclude (ncid parameter) results from up to 5 specific podcasts in a single query.

Use case: You search a podcaster's name and want to exclude search results from his/her shows. You can test on our website: https://lnns.co/lBkCy2bWE05

This is available for all plans, including FREE.
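A sketch of how the two parameters might be prepared for an episode search. Joining podcast ids with commas and using `q` for the search term are assumptions made for illustration; the 5-podcast limit is the one stated above.

```python
from typing import Dict, List, Optional

MAX_PODCASTS_PER_FILTER = 5  # limit stated in this announcement


def episode_search_params(query: str,
                          include: Optional[List[str]] = None,
                          exclude: Optional[List[str]] = None) -> Dict[str, str]:
    """Build GET /search parameters with optional ocid / ncid filters."""
    params = {"q": query, "type": "episode"}  # "q" is an assumed name
    for name, ids in (("ocid", include), ("ncid", exclude)):
        if not ids:
            continue
        if len(ids) > MAX_PODCASTS_PER_FILTER:
            raise ValueError(f"{name} accepts at most {MAX_PODCASTS_PER_FILTER} podcasts")
        params[name] = ",".join(ids)  # assumption: comma-separated ids
    return params
```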

Added "listen_score" and "listen_score_global_rank" fields for PRO users 🔗

We recently launched Listen Score, a metric that shows the estimated popularity of a podcast compared to all other rss-based public podcasts in the world on a scale from 0 to 100. It's like Nielsen ratings for podcasts. With Listen Score, you can get a rough sense of how popular a specific podcast is.

Now, we added "listen_score" and "listen_score_global_rank" fields to all podcast-related responses - only available to PRO users. You can find the description of these two fields under the Schema section of the API Docs.

You can learn more about Listen Score on this page.

Add coworkers to your API account 🔗

You are able to add coworkers to your API account now. They'll have separate login credentials. You don't need to share your login info with others any more.

Basically, your team members will have read-only access to the dashboard. They can see stats, logs, and API tokens, and they are able to test webhooks. But they can't see billing info or make changes (e.g., reset API tokens). In the future, we may allow multiple admins.

It's under the TEAM tab on the API dashboard page.

Playlist API and other improvements 🔗

We just made a few improvements to Listen API. There are no breaking changes. You don't need to do anything on your end, unless you want to use these new things:

1. Playlist API

We added two endpoints to fetch Listen Later playlists, which is one of the most frequent feature requests :) Many people want to use Listen Later as a headless CMS to manage curated podcasts / episodes in their apps or websites. Now, with the new Playlist API endpoints, you are able to fetch Listen Later playlists.

- GET /playlists/ : Fetch your playlists. Basically it returns those playlists on your Listen Later page when you are logged in.

- GET /playlists/{id} : Fetch episodes or podcasts of a specific playlist. For example, GET /playlists/m1pe7z60bsw returns episodes on this page. You are able to fetch any of your playlists and other people's public/unlisted playlists.

2. New parameters for podcast search

We added two parameters to GET /search: episode_count_min and episode_count_max. You are able to search podcasts with a certain number of episodes.

3. Schema change for simplified episode objects

We added a "podcast" field to simplified episode objects, which are in the response of these endpoints:

- GET /search

- POST /episodes

- GET /just_listen

- GET /episodes/{id}/recommendations

The schema is backward compatible. We keep all the "podcast_*" fields for existing users. You don't have to do anything on your end, unless you want to use podcast artwork images.

All "image" and "thumbnail" fields in episode objects are episode-specific images - <itunes:image> tag for an <item> in the rss feed. In some cases, episodes may have low-resolution images and you may prefer using those high-resolution podcast artwork images.
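If you prefer the podcast artwork, a fallback like the following may be enough. It is a sketch that assumes the nested "podcast" object carries its own "image" field; the helper name is hypothetical.

```python
from typing import Optional


def preferred_artwork(episode: dict) -> Optional[str]:
    """Prefer the podcast artwork, falling back to the episode image."""
    podcast = episode.get("podcast") or {}
    # Assumption: the nested podcast object exposes an "image" url.
    return podcast.get("image") or episode.get("image")
```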

A New Endpoint and Webhooks 🔗

We made two improvements recently:

First, we added a new endpoint DELETE /podcasts/{id} to delete a specific podcast. This is primarily for podcast hosting services (e.g., Buzzsprout, Anchor...) to help their users (i.e., podcasters) delete podcasts from the Listen Notes database. As we all know, the podcast industry is very fragmented: if podcasters want to delete their own shows from the Internet, they have to manually contact many podcast directories. It would be nice if a podcast hosting service allowed podcasters to push a button and delete their shows everywhere. Listen Notes can set a good example for other podcast directories. Let's start with this new API endpoint.

Second, we added webhook support for two endpoints: POST /podcasts/submit and DELETE /podcasts/{id}. Of course, we have to review new podcast submissions and podcast deletion requests, otherwise any random person (or bot) on the Internet could delete anything from the Listen Notes database :) If you are a podcast hosting service and use these two endpoints, you can set up webhooks in the dashboard to get notified once your submission / deletion requests are approved.

If you are not a podcast hosting service, you are welcome to integrate POST /podcasts/submit in your app. Imagine that your users can't find certain podcasts in your app. They should be able to submit the rss feed directly from your app.

What happens if you (or your users) see some podcast metadata errors (e.g., broken audio, changed rss feeds...)? You can report to us via hello@listennotes.com or use self-service web tools to help fix those errors. We rely on the community to help keep the podcast database up-to-date, which is similar to how Goodreads relies on 110,000+ volunteers to help improve book metadata in their database.

Pagination support for latest episodes of POST /podcasts/ 🔗

If you are a PRO user, you can use the POST /podcasts/ endpoint, which allows you to fetch latest episodes of up to 10 podcasts in one API call.

We just added a next_episode_pub_date parameter to paginate through latest episodes. You will pass the value of the next_episode_pub_date field from the response of the last request. Please see the documentation for details.
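The pagination loop can be sketched as below. The network call is left to a caller-supplied `fetch_page` function (hypothetical), and treating a missing or empty next_episode_pub_date as the end of the data is an assumption, not documented behavior.

```python
from typing import Callable, Iterator, Optional


def iter_latest_episodes(fetch_page: Callable[[Optional[int]], dict],
                         max_pages: int = 10) -> Iterator[dict]:
    """Yield episodes page by page, following next_episode_pub_date."""
    cursor: Optional[int] = None  # the first request sends no cursor
    for _ in range(max_pages):  # hard cap so a bad cursor cannot loop forever
        page = fetch_page(cursor)
        yield from page.get("latest_episodes", [])
        cursor = page.get("next_episode_pub_date")
        if not cursor:  # assumed end-of-data signal
            break
```

Here `fetch_page(cursor)` would issue POST /podcasts/ with next_episode_pub_date set to `cursor` and return the parsed JSON.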

Added "top_level_only" parameter to GET /genres 🔗

Genres are hierarchical. A genre (e.g., Business) can have multiple sub-genres (e.g., Entrepreneurship, Investing, Management, Marketing...).

By default, the GET /genres endpoint returns a long list of all genres. But you may want to display only top level genres. Previously, you had to build a tree data structure (w/ parent_id) from the data returned from GET /genres.

Now, we added a "top_level_only" parameter to make things easy: GET /genres?top_level_only=1 will give you just top level genres.
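If you still need the full hierarchy, grouping the flat list by parent_id is straightforward. A minimal sketch, assuming each genre is a dict with "name" and "parent_id" keys; the sample ids used to try it out would be made up.

```python
from collections import defaultdict
from typing import Dict, List


def children_by_parent(genres: List[dict]) -> Dict[object, List[str]]:
    """Group genre names under the id of their parent genre."""
    children = defaultdict(list)
    for genre in genres:
        # Genres that share a parent_id are siblings in the tree.
        children[genre.get("parent_id")].append(genre["name"])
    return dict(children)
```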

Added "link" and "website" fields to the API response 🔗

We just added "link" and "website" fields to the API response - "link" for episodes, and "website" for podcasts. The data for both fields are from the <link> tag in the RSS feed, which are likely official web pages of episodes or podcasts. Please read the documentation for the data schema.

If you are looking for social accounts or other urls of a podcast, you can use the "extra" field in the API response (for podcasts), which is available for the PRO user.

Some API improvements. No action is required on your end. 🔗

[You can read the detailed version of this announcement on our blog]

Better documentation

Recently, we created an OpenAPI spec for our API documentation. You can take a quick look at Listen API’s OpenAPI spec in YAML or JSON. We generate the main documentation page from the standardized OpenAPI spec. Specifically, you can see the API response schema (e.g., data type, example value, default value, description…) on the page.

Furthermore, we embed Runkit widgets on the main documentation page to make it easy for you to test API endpoints and preview the actual response.

Better performance

We made some infrastructure improvements (e.g., code optimization, server upgrade…) and reduced the average API response time from ~100 ms to ~85 ms. You can always go to our status page to monitor the realtime health of Listen API.

Bigger thumbnail images

We made the thumbnail images bigger (the “thumbnail” field in the API response)! Previously the thumbnail image was 150x150, which was very blurry on modern smartphone screens :) Now the image is 300x300.

Fetch up to 10 latest episodes for multiple podcasts with one API request 🔗

We just added a new parameter show_latest_episodes to POST /api/v2/podcasts (Batch fetch basic meta data for podcasts). If show_latest_episodes is 1, then the response will include a field "latest_episodes", which is a list of up to 10 latest episodes of the podcasts in this batch, sorted by pub_date_ms in descending order.

This could be used to quickly check if there are new episodes for a list of subscribed podcasts.
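A sketch of that check, assuming your app stores the pub_date_ms of the newest episode it has already seen (the function name is hypothetical):

```python
from itertools import takewhile
from typing import List


def new_episodes_since(latest_episodes: List[dict],
                       last_seen_pub_date_ms: int) -> List[dict]:
    """Return the episodes published after the last one the user has seen."""
    # The list is sorted newest first, so stop at the first old episode.
    return list(takewhile(
        lambda episode: episode["pub_date_ms"] > last_seen_pub_date_ms,
        latest_episodes,
    ))
```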

Fields updates: audio_length, genres, and latest_pub_date_ms 🔗

Due to technical debt, we've got three inconsistent fields across multiple API endpoints:

1. audio_length was a numeric value (in seconds, e.g., 1234) for an endpoint, but was a human-readable string (e.g., 00:02:22) for another endpoint. Now we introduce a new field "audio_length_sec" to replace "audio_length". audio_length_sec is a numeric value (in seconds, e.g., 1234).

2. genres was an array of genre ids for an endpoint, but was an array of genre names for another endpoint. Now we introduce a new field "genre_ids" to replace "genres". genre_ids is an array of genre ids.

3. Previously we had a field "lastest_pub_date_ms" with typo "lastest". Now we introduce a new field "latest_pub_date_ms" to replace "lastest_pub_date_ms".

What happens to the old fields (i.e., audio_length, genres, and lastest_pub_date_ms)? For users who signed up before May 2, 2019, you can continue using those old fields. Your code won't break. For new users who signed up after May 2, 2019, those old fields are gone from the API response (and from the documentation) - we don't want to confuse new users :)

We would encourage you to use the new fields audio_length_sec, genre_ids, and latest_pub_date_ms. We'll completely remove the old fields (i.e., audio_length, genres, and lastest_pub_date_ms) from future major upgrades (v3, v4, v5...).
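During a migration, client code can prefer the new field and fall back to the old one. A minimal sketch for audio length, assuming the legacy value is either a number of seconds or an "HH:MM:SS" string as described above:

```python
from typing import Optional


def audio_length_seconds(episode: dict) -> Optional[int]:
    """Read audio_length_sec, falling back to the legacy audio_length."""
    if episode.get("audio_length_sec") is not None:
        return int(episode["audio_length_sec"])
    legacy = episode.get("audio_length")
    if legacy is None:
        return None
    if isinstance(legacy, (int, float)):
        return int(legacy)  # legacy numeric form, already in seconds
    seconds = 0
    for part in str(legacy).split(":"):  # legacy "HH:MM:SS" form
        seconds = seconds * 60 + int(part)
    return seconds
```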

Batch fetching podcasts by iTunes ids 🔗

POST /api/v2/podcasts allows you to fetch up to 10 podcasts with one single request. In addition to using Listen Notes ids and rss urls, you can now provide iTunes ids as parameters.

Why do people want to look up podcasts using iTunes ids? Well, some PR/ads agencies already have a list of iTunes ids and they want an easy way to get podcast meta data from these iTunes ids.

New endpoint to submit a podcast to Listen Notes 🔗

This new endpoint allows you to submit an RSS url to Listen Notes: POST /api/v2/podcasts/submit. With this endpoint, your users can directly submit new podcasts to Listen Notes from your apps or services (especially podcast hosting services).

If the RSS url exists in our database, the endpoint will return basic meta data of that podcast immediately. Otherwise, we'll review and add the podcast to our database within 12 hours.